
Introduction: Future Computing as the Foundation of Tomorrow’s Technology

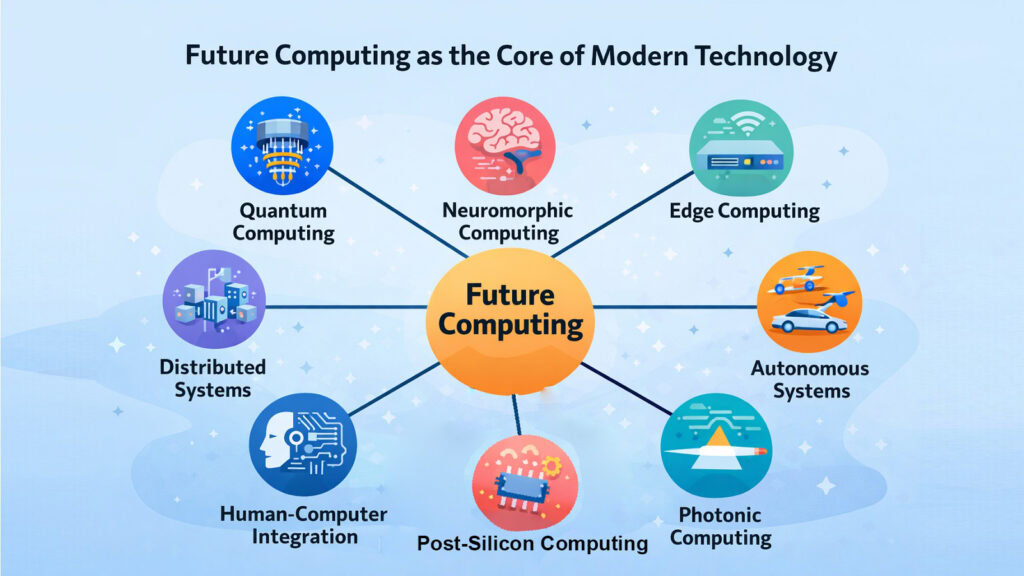

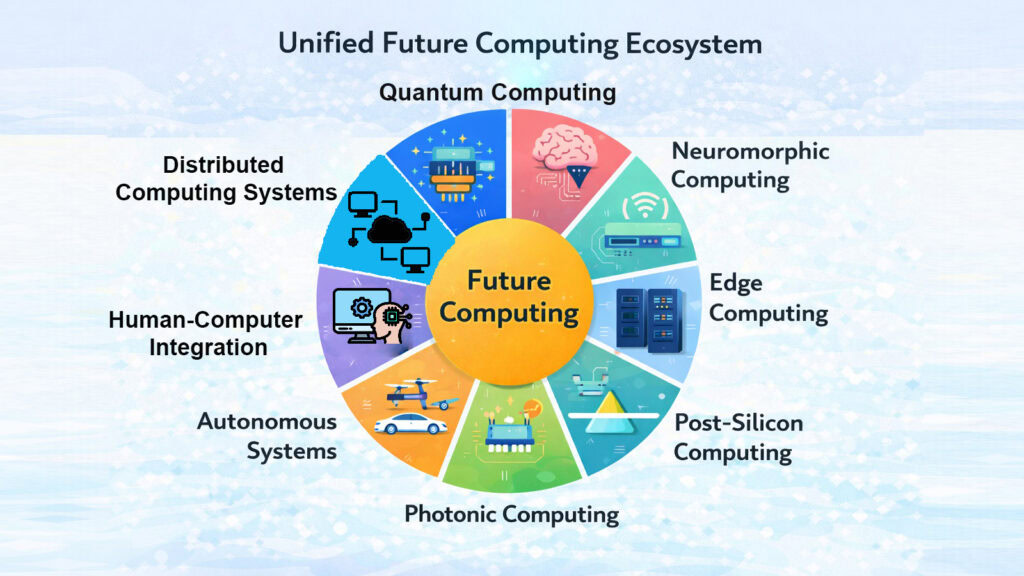

Something is shifting beneath the surface of modern technology. The computers we rely on today are running up against very real walls. Processors cannot keep getting faster the old way. Energy demands are climbing. Data is growing at a pace that traditional systems are struggling to handle. Future computing is the response to these limits, and it is not one single idea but a whole family of new approaches rising together.

Traditional computing was built on a simple idea: process one instruction at a time, very quickly. That model served us well for decades. But the problems we face now, from modeling climate change to training artificial intelligence to securing global networks, demand something more. Future computing steps in with new architectures, new materials, and entirely new ways of thinking about what a computer can do.

This is not just about speed anymore. It is about intelligence built into machines. It is about systems that adapt to problems instead of waiting for instructions. It is about computing that happens at the edge of a network, inside the human body, or at the speed of light. Eight broad trends define this shift, and together they are quietly rewriting the rules of what is possible.

Future Computing: Eight Defining Trends

| Trend | Core Idea |
|---|---|
| Quantum Computing | Uses quantum bits (qubits), which can hold superpositions of states, to tackle certain problems that classical computers cannot handle efficiently. |
| Neuromorphic Computing | Mimics the structure of the human brain using artificial neurons and synapses for adaptive, low-power processing. |
| Edge Computing Evolution | Moves data processing closer to the source, reducing latency and reliance on centralized cloud infrastructure. |
| Distributed Computing Systems | Spreads tasks across many interconnected machines to improve performance, resilience, and scale. |
| Human-Computer Integration | Bridges biology and technology through interfaces such as brain-computer devices, gesture control, and immersive systems. |
| Autonomous Computing Systems | Enables machines to self-manage, self-optimize, and make decisions with minimal human intervention. |
| Photonic Computing | Replaces electrical signals with light to achieve higher-bandwidth data transfer and reduced energy consumption. |
| Post-Silicon Computing | Moves beyond traditional silicon chips using new materials and architectures to extend computational power. |

1. Future Computing and Quantum Computing: Beyond Classical Limits

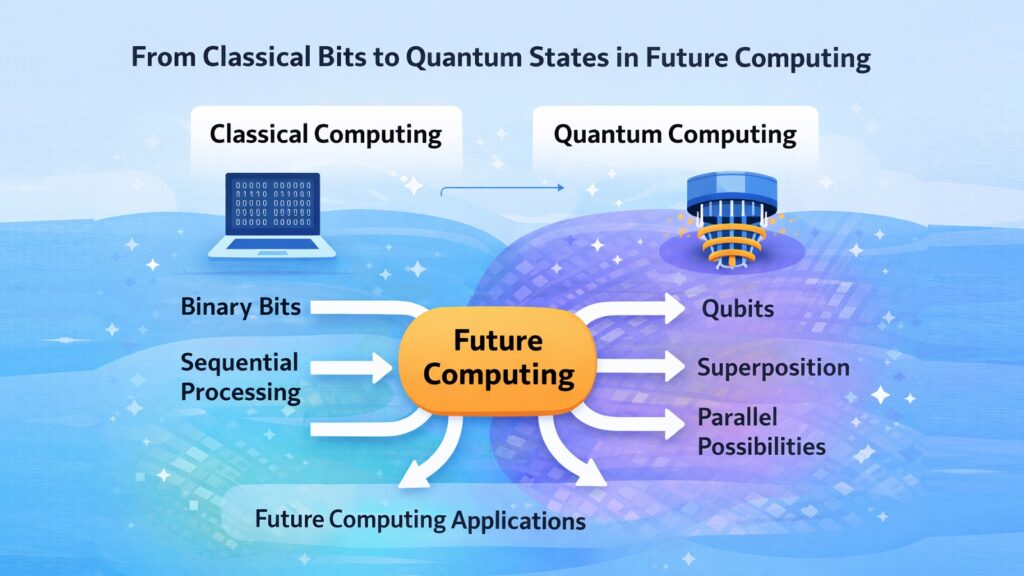

A classical computer thinks in ones and zeros. Every piece of information it processes is either on or off, yes or no. That binary logic is powerful, but it has a ceiling. Quantum computing raises that ceiling for certain problems by working with units called qubits, which can exist in a combination of 0 and 1 at the same time through a property called superposition.

Think of it this way. A classical computer searching through a maze tries one path at a time. A quantum computer does not simply try every path at once and report the best one; it holds many possibilities in superposition and uses interference so that wrong answers cancel out while right answers reinforce each other. For certain types of problems, especially those involving enormous combinations of variables, this could let quantum systems find answers in minutes that would take classical machines thousands of years.
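To make superposition and interference a little more concrete, here is a minimal state-vector sketch in plain Python. It assumes an idealized, noise-free simulator, and all function names are hypothetical. Applying a Hadamard gate to each of n qubits produces 2^n equal amplitudes, yet a measurement returns only one outcome; applying the gates a second time makes the amplitudes interfere so that every outcome except |000⟩ cancels.

```python
import math
import random

def zero_state(n: int) -> list[complex]:
    """|00...0> for n qubits: 2**n amplitudes, all zero except the first."""
    state = [0j] * (2 ** n)
    state[0] = 1 + 0j
    return state

def apply_hadamard(state: list[complex], qubit: int) -> list[complex]:
    """Apply a Hadamard gate to one qubit of a state vector."""
    bit = 1 << qubit
    s = 1 / math.sqrt(2)
    new_state = [0j] * len(state)
    for idx, amp in enumerate(state):
        if idx & bit:                      # H|1> = (|0> - |1>) / sqrt(2)
            new_state[idx ^ bit] += s * amp
            new_state[idx] -= s * amp
        else:                              # H|0> = (|0> + |1>) / sqrt(2)
            new_state[idx] += s * amp
            new_state[idx | bit] += s * amp
    return new_state

def hadamard_all(state: list[complex], n: int) -> list[complex]:
    """Apply H to every one of the n qubits."""
    for q in range(n):
        state = apply_hadamard(state, q)
    return state

def probabilities(state: list[complex]) -> list[float]:
    """Born rule: the probability of each outcome is |amplitude|**2."""
    return [abs(a) ** 2 for a in state]

def measure(state: list[complex], rng: random.Random) -> int:
    """Sample a single basis state; the superposition is not observed directly."""
    return rng.choices(range(len(state)), weights=probabilities(state), k=1)[0]

n = 3
superposed = hadamard_all(zero_state(n), n)
print([round(p, 3) for p in probabilities(superposed)])  # eight outcomes, 0.125 each
print(measure(superposed, random.Random(0)))               # one random outcome from 0 to 7

# Applying H again makes the amplitudes interfere: everything except |000> cancels.
back = hadamard_all(superposed, n)
print([round(p, 3) for p in probabilities(back)])          # 1.0 for |000>, 0.0 elsewhere
```

Note that the list of amplitudes doubles with every added qubit, which is why classical simulation of large quantum systems quickly becomes impractical.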

Companies like IBM and Google, along with several government research agencies, have been racing to build more stable quantum systems. IBM passed the 1,000-qubit mark with its 1,121-qubit Condor processor in December 2023. Google claimed quantum supremacy back in 2019 on a narrow benchmark task. The hardware is still fragile and error-prone, but progress is steady. Future computing is likely to include quantum processors as a specialized but crucial layer for tackling hard scientific and logistical problems.

Future Computing and Quantum Computing: Key Facts

| Aspect | Detail |
|---|---|
| Basic unit | The qubit, which can be in a superposition of 0 and 1 rather than only one or the other. |
| IBM milestone | IBM’s Condor processor reached 1,121 qubits in December 2023. |
| Google milestone | In 2019, Google reported that its Sycamore processor completed in 200 seconds a calculation it estimated would take a classical supercomputer about 10,000 years; IBM disputed that estimate. |
| Key challenge | Quantum decoherence, where qubits lose their quantum state due to environmental interference. |
| Promising application | Drug discovery, where quantum systems could simulate molecular behavior at the atomic level. |
| Error correction | Quantum error correction remains an active area of research and requires many physical qubits per logical qubit. |
| Current state | Quantum computers are not yet general-purpose; they excel only at specific problem types. |
| Future outlook | Fault-tolerant quantum computing could arrive within the next one to two decades, according to researchers at MIT and NIST. |
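Why does error correction need many physical qubits per logical qubit? The simplest illustration is the three-copy repetition code with majority voting. This sketch is a classical analogy only: it assumes independent bit-flip errors with probability p, and it ignores phase errors and the indirect measurements real quantum codes require. The function names are hypothetical.

```python
import random

def logical_error_rate(p: float) -> float:
    """Exact failure probability of 3-copy majority voting:
    decoding fails when 2 or all 3 copies are flipped."""
    return 3 * p**2 * (1 - p) + p**3

def simulate(p: float, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of the same failure probability."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))  # independent errors per copy
        if flips >= 2:                                   # majority corrupted: wrong result
            failures += 1
    return failures / trials

for p in (0.1, 0.01, 0.001):
    print(f"physical error {p}: logical error {logical_error_rate(p):.2e}")
print(f"simulated at p=0.1: {simulate(0.1, 20_000):.3f}")
```

Tripling the hardware turns a 1% physical error rate into roughly a 0.03% logical one in this toy model. Real quantum codes need far more redundancy than three copies, which is where the large physical-to-logical qubit overhead comes from.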

2. Future Computing and Neuromorphic Computing: Machines That Think Like Brains

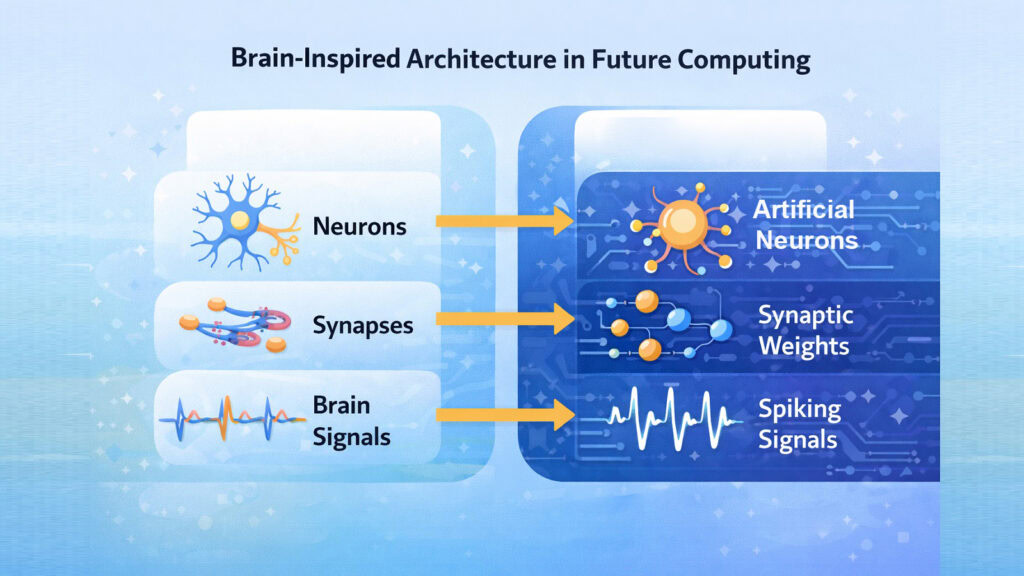

The human brain runs on approximately 20 watts of power. It learns from experience, adapts to new information, and processes sensory data in real time. No traditional computer matches that combination of efficiency and adaptability. Neuromorphic computing seeks to close this gap by building chips that work more like networks of biological neurons than like conventional digital logic.

A neuromorphic chip uses artificial neurons that fire signals only when needed, much like biological neurons do. This event-driven approach means the chip is not constantly burning energy while waiting for input. It responds to changes, learns patterns, and adjusts its behavior over time. Intel’s Loihi chip, first announced in 2017, is one of the most studied examples of this architecture.
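The event-driven behavior can be illustrated with a leaky integrate-and-fire (LIF) neuron, a standard textbook spiking model. This is a simplified discrete-time sketch with hypothetical parameter values; it is not Loihi’s actual neuron model or programming interface. The membrane potential leaks toward zero, accumulates input, and emits a spike only when it crosses a threshold, so silence in produces silence out.

```python
def lif_spikes(inputs: list[float], leak: float = 0.9,
               threshold: float = 1.0, reset: float = 0.0) -> list[int]:
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    inputs: input current at each time step.
    Returns the time steps at which the neuron fired.
    """
    v = 0.0                          # membrane potential
    spikes = []
    for t, current in enumerate(inputs):
        v = leak * v + current       # leak toward zero, then integrate the input
        if v >= threshold:
            spikes.append(t)         # an output event is produced only here
            v = reset                # potential resets after each spike
    return spikes

# Quiet, then a steady stimulus, then quiet again.
stimulus = [0.0] * 5 + [0.4] * 10 + [0.0] * 5
print(lif_spikes(stimulus))  # [7, 10, 13]
```

Only three output events are produced across twenty time steps; on event-driven hardware, the steps without spikes cost little or no communication energy.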

The implications for future computing are significant. Devices that need to recognize objects, process speech, or respond to physical environments could run on tiny neuromorphic chips with minimal power. This matters for everything from hearing aids to autonomous robots to smart sensors embedded in industrial equipment. The technology is still maturing, but it represents a genuine rethinking of how computing should be done.

Future Computing and Neuromorphic Computing: Key Facts

| Aspect | Detail |
|---|---|
| Inspiration | The architecture mimics the structure and function of biological neural networks in the human brain. |
| Intel Loihi 2 | Released in 2021, Intel’s second-generation neuromorphic chip supports up to 1 million artificial neurons. |
| Power efficiency | Neuromorphic chips can be up to 1,000 times more energy-efficient than traditional CPUs for specific AI tasks. |
| IBM TrueNorth | IBM’s TrueNorth chip, released in 2014, contains 4,096 cores and 256 million synapses on a single chip. |
| Learning capability | Neuromorphic systems can perform on-chip learning, adapting without retraining on external data. |
| Key application | Edge AI applications such as real-time gesture recognition, speech processing, and robotic motion control. |
| Spike-based processing | Information is transmitted through spikes or pulses, reducing data movement and power use. |
| Research leadership | DARPA’s SyNAPSE program and the Human Brain Project in Europe have both invested in neuromorphic research. |

3. Future Computing and Edge Computing Evolution: Processing at the Source

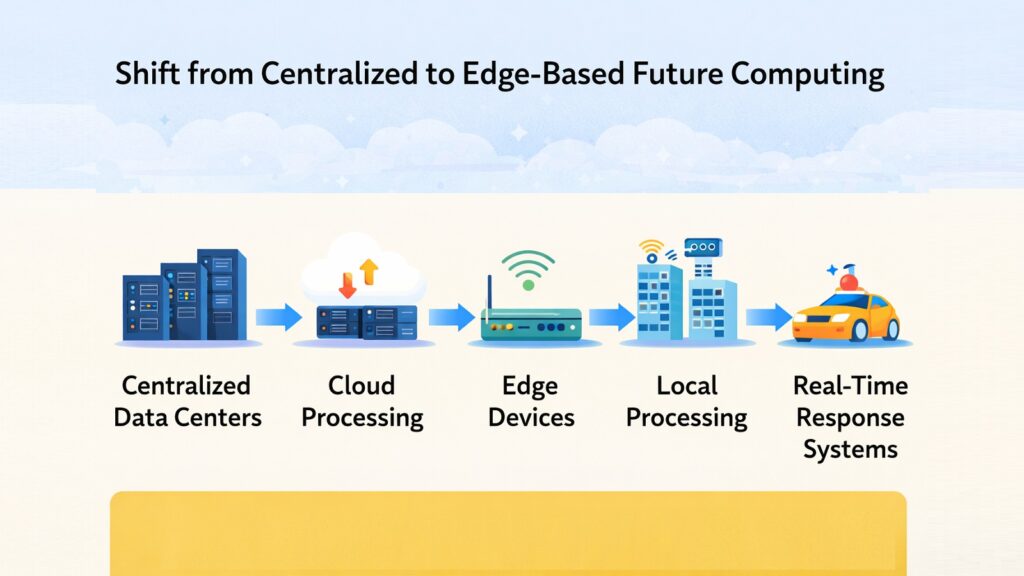

For years, the cloud was the answer to everything. Send your data to a massive server farm somewhere far away, let it process the information, and send the result back. That model worked well enough when data volumes were manageable and delays were acceptable. But neither of those conditions holds anymore.

Edge computing changes the equation by moving the processing closer to where the data is born. A sensor on a factory floor does not need to send readings to a distant server just to detect an anomaly. A camera in a self-driving car cannot afford to wait for a cloud response. These devices need answers in milliseconds, and edge computing provides that by handling the computation locally or at a nearby node.
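As a rough illustration of detecting an anomaly locally, the sketch below keeps a rolling window of recent sensor readings on the device and flags a reading only when it deviates strongly from the recent mean. Only flagged readings would need to travel upstream. The class name, window size, and z-score threshold of 3 are hypothetical choices, not taken from any particular edge platform.

```python
import statistics
from collections import deque

class EdgeAnomalyDetector:
    """Flags readings far from the recent rolling mean (a z-score test), on the device."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0):
        self.readings = deque(maxlen=window)  # recent normal readings only
        self.z_threshold = z_threshold

    def process(self, value: float) -> bool:
        """Return True if `value` should be reported upstream as an anomaly."""
        anomalous = False
        if len(self.readings) >= 2:  # stdev needs at least two points
            mean = statistics.fmean(self.readings)
            stdev = statistics.stdev(self.readings)
            # skip the test on perfectly flat data to avoid dividing by zero
            if stdev > 0 and abs(value - mean) / stdev > self.z_threshold:
                anomalous = True
        if not anomalous:
            self.readings.append(value)  # learn only from normal readings
        return anomalous

detector = EdgeAnomalyDetector(window=10)
stream = [20.0, 20.5, 19.8, 20.2, 20.1, 19.9, 20.3, 20.0, 19.7, 20.4, 35.0]
alerts = [v for v in stream if detector.process(v)]
print(alerts)  # [35.0]: only this reading would leave the device
```

Excluding anomalous readings from the window keeps a single spike from inflating the baseline; a production system would also need to handle genuine, lasting shifts in the signal.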

The evolution of edge computing is closely tied to the rollout of 5G networks, which offer the low latency and high bandwidth that edge systems need to function well. According to IDC, global edge computing spending was expected to reach around 232 billion dollars in 2024. Healthcare monitors, smart city sensors, industrial robots, and agricultural drones are among the real-world applications already benefiting from this shift.

Future Computing and Edge Computing Evolution: Key Facts

| Aspect | Detail |
|---|---|
| Definition | Processing data at or near the source of generation rather than sending it to a centralized cloud. |
| Latency benefit | Edge processing can reduce response times from hundreds of milliseconds to under 10 milliseconds. |
| Market size | IDC projected global edge computing spending of around 232 billion dollars in 2024. |
| 5G connection | 5G networks enable edge deployments by providing high-speed, low-latency wireless connections. |
| Healthcare use case | Wearable heart monitors process data locally to detect irregularities and alert users in real time. |
| Industrial application | Predictive maintenance systems at manufacturing plants use edge nodes to identify equipment failure patterns. |
| Key challenge | Managing security and software updates across thousands of distributed edge devices is complex. |
| Key players | Amazon Web Services, Microsoft Azure, and Google Cloud all offer dedicated edge computing platforms. |
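A back-of-the-envelope calculation shows why distance alone limits cloud response times. Light in optical fiber travels at roughly 200,000 km per second (about two-thirds of its speed in a vacuum), so every 1,000 km of fiber adds about 5 ms in each direction before any routing, queuing, or processing. The distances below are hypothetical examples.

```python
FIBER_KM_PER_S = 200_000  # approximate speed of light in optical fiber (about 2/3 of c)

def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from fiber propagation delay alone."""
    return 2 * distance_km / FIBER_KM_PER_S * 1000

for label, km in [("on-site edge node", 1), ("metro edge site", 50), ("distant cloud region", 1500)]:
    print(f"{label:>20}: at least {min_round_trip_ms(km):.2f} ms")
```

Real networks add routing, queuing, and server processing on top of this physical floor, which is why nearby edge nodes can meet single-digit-millisecond budgets that a data center 1,500 km away cannot.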

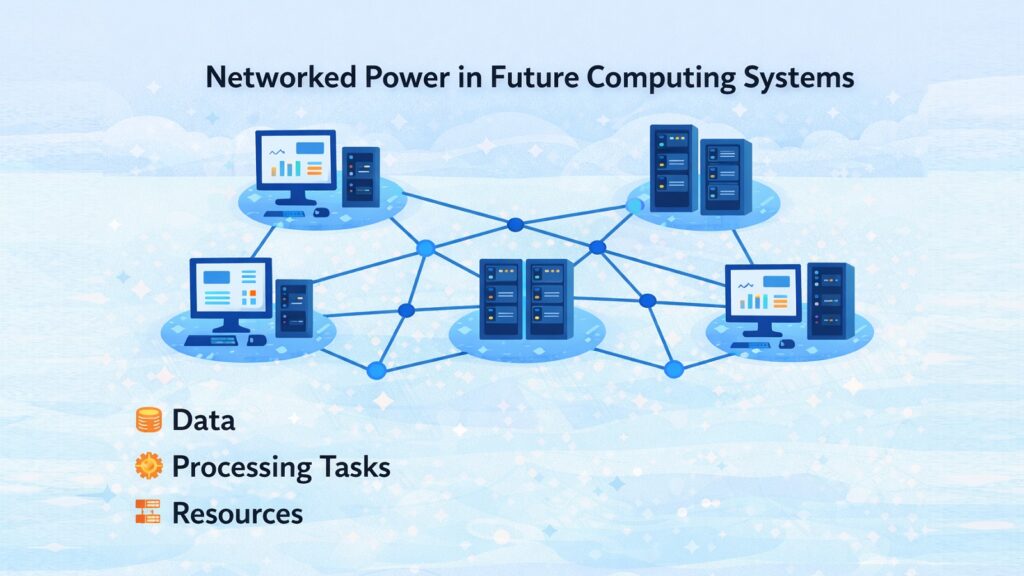

4. Future Computing and Distributed Computing Systems: Power Across Networks

No single machine can hold the whole world’s data or do all of its computing. The internet itself is a kind of answer to that fact, but distributed computing takes the idea further. Instead of one powerful computer working alone, distributed systems spread a task across many machines that cooperate to solve it together.

The practical benefits are substantial. When one machine fails, others keep working. When demand spikes, more machines can be added to share the load. Scientific projects like SETI@home once used this approach to analyze radio telescope data using spare processing time on millions of volunteers’ personal computers worldwide. Blockchain networks are another modern example: no single party controls the ledger because every full node keeps its own copy.
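Shared copies of a ledger are useful because each block commits to the hash of the previous one, so any node can detect tampering by recomputing the links. Below is a minimal hash-linked ledger sketch using Python’s standard `hashlib`. It deliberately omits everything that makes a real blockchain secure, such as consensus, proof-of-work, digital signatures, and networking, and the function names are hypothetical.

```python
import hashlib
import json

def block_hash(block: dict) -> str:
    """SHA-256 of a block's canonical JSON encoding."""
    encoded = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()

def append_block(chain: list[dict], data: str) -> None:
    """Add a block that commits to the hash of the previous block."""
    prev = block_hash(chain[-1]) if chain else "0" * 64  # genesis block has no parent
    chain.append({"index": len(chain), "prev_hash": prev, "data": data})

def verify(chain: list[dict]) -> bool:
    """Recompute every link; editing an earlier block breaks the link after it."""
    for i in range(1, len(chain)):
        if chain[i]["prev_hash"] != block_hash(chain[i - 1]):
            return False
    return True

ledger: list[dict] = []
for entry in ["alice pays bob 5", "bob pays carol 2", "carol pays dave 1"]:
    append_block(ledger, entry)
print(verify(ledger))                     # True
ledger[1]["data"] = "bob pays carol 200"  # tamper with a middle block
print(verify(ledger))                     # False
```

In a real network, many independent nodes run this kind of check on their own copies, which is why a single tampered copy does not go unnoticed.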

Future computing will rely heavily on distributed architectures because the problems it needs to solve are simply too large for any single system. Training large language models, running global financial systems, processing climate simulations, and managing real-time logistics across continents all require distributed frameworks. Apache Hadoop, Apache Spark, and cloud-native distributed systems from AWS and Google already power much of this today.
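The divide-and-combine pattern behind frameworks like Hadoop MapReduce can be sketched on a single machine with Python’s `concurrent.futures`. In this sketch, threads stand in for cluster nodes, and the deliberately failing chunk and the retry loop are hypothetical stand-ins for a node crash and a scheduler re-running the lost task. Real frameworks handle scheduling, data locality, and recovery very differently.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

def map_chunk(chunk_id: int, lines: list[str], attempt: int,
              flaky: frozenset[int]) -> Counter:
    """Map step: count words in one chunk.

    Chunks listed in `flaky` fail on their first attempt to simulate a node crash.
    """
    if chunk_id in flaky and attempt == 0:
        raise RuntimeError(f"node handling chunk {chunk_id} failed")
    return Counter(word for line in lines for word in line.lower().split())

def word_count(lines: list[str], n_chunks: int = 3,
               flaky: frozenset[int] = frozenset(), max_attempts: int = 3) -> Counter:
    chunks = [lines[i::n_chunks] for i in range(n_chunks)]  # split the work across "nodes"
    total: Counter = Counter()
    with ThreadPoolExecutor(max_workers=n_chunks) as pool:
        futures = {i: pool.submit(map_chunk, i, chunk, 0, flaky) for i, chunk in enumerate(chunks)}
        for i, future in futures.items():
            for attempt in range(1, max_attempts + 1):
                try:
                    total.update(future.result())  # reduce step: merge partial counts
                    break
                except RuntimeError:
                    if attempt == max_attempts:
                        raise  # give up after too many failures
                    # re-run the lost task, as a scheduler would on a healthy node
                    future = pool.submit(map_chunk, i, chunks[i], attempt, flaky)
    return total

text = ["the quick brown fox", "jumps over the lazy dog", "the dog sleeps"]
counts = word_count(text, n_chunks=3, flaky=frozenset({1}))
print(counts.most_common(2))  # [('the', 3), ('dog', 2)]
```

Even though chunk 1 fails once, the final counts are complete, which is the fault-tolerance property the section describes.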

Future Computing and Distributed Computing Systems: Key Facts

| Aspect | Detail |
|---|---|
| Core principle | Tasks are divided and executed across multiple interconnected machines working in parallel. |
| Fault tolerance | If one node fails, others continue processing, preventing total system failure. |
| SETI@home | This project distributed astronomical data analysis across the computers of over 5 million volunteers worldwide. |
| Blockchain example | Bitcoin’s blockchain operates across tens of thousands of nodes with no central authority. |
| Apache Spark | For some in-memory workloads, Apache Spark can process large datasets up to 100 times faster than Hadoop’s original MapReduce framework. |
| Cloud adoption | Major cloud platforms including AWS, Azure, and Google Cloud are built on distributed computing principles. |
| AI training | GPT-4 was reportedly trained on thousands of GPUs in a distributed cluster over several months; OpenAI has not published full training details. |
| Key challenge | Network latency and synchronization between nodes remain significant engineering obstacles. |

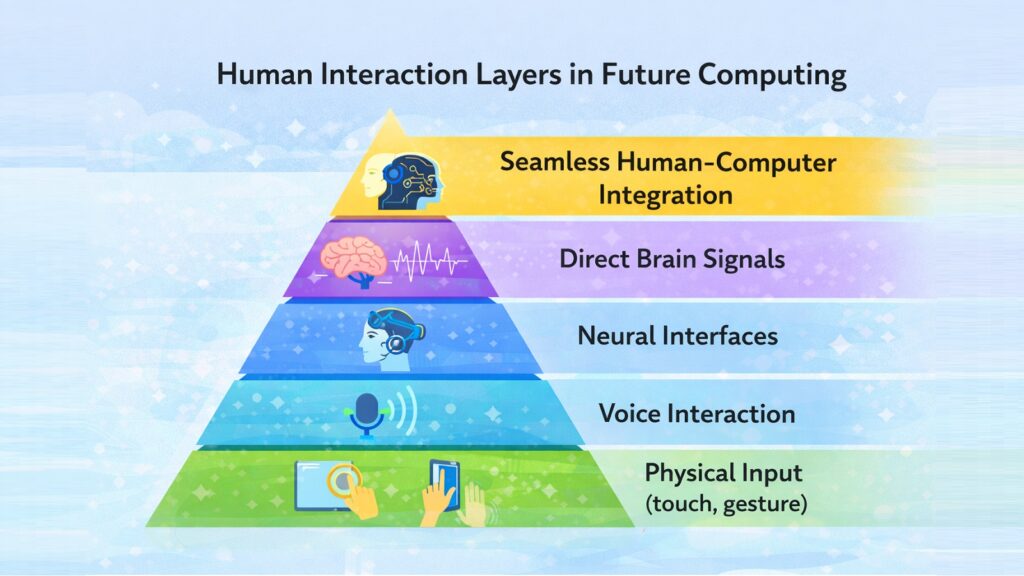

5. Future Computing and Human-Computer Integration: Blending Biology and Technology

Computers used to live on desks. Then they moved into pockets. Now they are beginning to move closer still, toward the human body itself. Human-computer integration is about making the boundary between person and machine less abrupt. It is not science fiction anymore. The pieces are already in place.

Voice interfaces like Amazon Alexa and Apple’s Siri turned spoken words into commands. Gesture-based systems let users control devices with hand movements. Extended reality headsets blend digital content with the physical world. And brain-computer interfaces, which seemed impossibly distant just a decade ago, are entering early clinical trials. Neuralink received FDA approval for human trials of its brain implant in 2023. Synchron, another company in the field, had already implanted its device in human patients in the United States before that.

These developments are not about replacing humans with machines. They are about removing friction in how people interact with technology. A surgeon who can control instruments through thought rather than a handheld tool. A person with paralysis who can type using only neural signals. A worker in a warehouse who receives guidance through an augmented reality overlay rather than a printed manual. Future computing is being shaped around human needs in a much more literal sense.
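How can neural signals move a cursor or select letters? One classic decoding idea from motor neuroscience is the population vector: each recorded neuron fires most for a preferred movement direction, and weighting those directions by how active each neuron is yields an estimate of the intended movement. The sketch below uses simulated, cosine-tuned neurons with made-up parameters and hypothetical function names. Clinical systems typically use more sophisticated, individually calibrated decoders, so treat this as a conceptual illustration only.

```python
import math

def firing_rate(preferred_deg: float, movement_deg: float,
                baseline: float = 10.0, depth: float = 8.0) -> float:
    """Cosine tuning: the rate peaks when movement matches the preferred direction."""
    return baseline + depth * math.cos(math.radians(movement_deg - preferred_deg))

def population_vector(preferred_dirs: list[float], rates: list[float],
                      baseline: float = 10.0) -> float:
    """Decode a movement direction (degrees) from firing rates.

    Each neuron votes for its preferred direction, weighted by how far
    its rate is above baseline.
    """
    x = y = 0.0
    for pref, rate in zip(preferred_dirs, rates):
        weight = rate - baseline
        x += weight * math.cos(math.radians(pref))
        y += weight * math.sin(math.radians(pref))
    return math.degrees(math.atan2(y, x)) % 360

neurons = [0, 45, 90, 135, 180, 225, 270, 315]  # evenly spaced preferred directions
intended = 60.0                                  # direction the user is trying to move
rates = [firing_rate(p, intended) for p in neurons]
print(round(population_vector(neurons, rates), 1))  # 60.0
```

With evenly spaced, noise-free neurons the decoded direction matches the intended one exactly; real recordings are noisy, which is one reason practical decoders are more elaborate.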

Future Computing and Human-Computer Integration: Key Facts

| Aspect | Detail |
|---|---|
| Brain-computer interface | Neuralink received FDA approval for human clinical trials in May 2023. |
| Synchron milestone | Synchron implanted its Stentrode brain device in US patients in 2022, enabling text typing through neural signals. |
| Voice assistants | Amazon Alexa alone supports over 100,000 third-party skills, showing the scale of voice-based integration. |
| Augmented reality | Apple’s Vision Pro headset, released in 2024, uses eye tracking, hand gestures, and voice for full computer control. |
| Cochlear implants | Over 700,000 people worldwide use cochlear implants, one of the earliest successful human-computer integrations. |
| EEG-based control | Non-invasive EEG headsets allow users to control simple software interfaces using brainwave patterns. |
| Haptic feedback | Haptic gloves and suits are being developed to allow users to feel digital objects in virtual environments. |
| Medical use case | BrainGate clinical research, which began implanting participants in 2004, has enabled paralyzed individuals to control computer cursors and robotic arms using neural implants. |

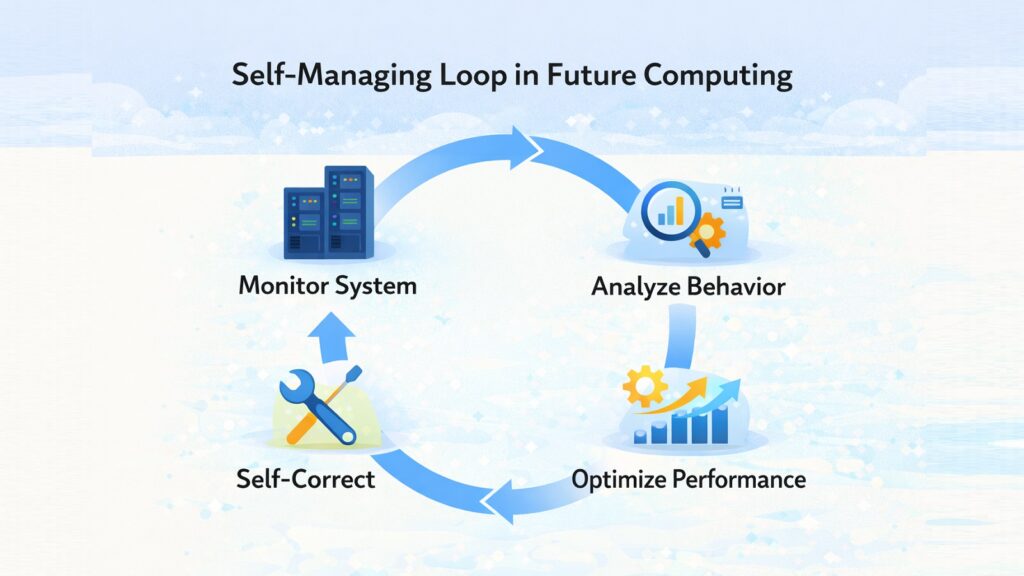

6. Future Computing and Autonomous Computing Systems: Self-Managing Machines

One of the quieter but more consequential shifts in future computing is the move toward systems that manage themselves. Traditional computing required human administrators to monitor performance, patch problems, allocate resources, and respond to failures. As systems grow more complex, that approach becomes unsustainable.

Autonomous computing draws on concepts that IBM outlined in its autonomic computing initiative back in 2001. The goal was to build systems with four core self-managing properties: self-configuration, self-healing, self-optimization, and self-protection. Today, these ideas are becoming real through machine learning and AI-driven operations tools.
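IBM’s autonomic computing work described a control loop often summarized as MAPE-K: Monitor, Analyze, Plan, and Execute, all sharing a Knowledge base. The minimal sketch below applies that loop to self-healing by restarting any service that should be running but is not. The class, service names, and simulated crash are hypothetical; real systems, such as Kubernetes controllers, follow a similar reconcile-toward-desired-state pattern with far more detail.

```python
from dataclasses import dataclass, field

@dataclass
class SelfHealingManager:
    """Toy autonomic manager: Monitor, Analyze, Plan, Execute over shared Knowledge."""
    desired: set[str]                               # Knowledge: services that should be running
    running: set[str] = field(default_factory=set)  # state of the managed resources
    log: list[str] = field(default_factory=list)    # record of actions taken

    def monitor(self) -> set[str]:
        """Observe which services are currently up."""
        return set(self.running)

    def analyze(self, observed: set[str]) -> set[str]:
        """Compare observations with the desired state; missing services are symptoms."""
        return self.desired - observed

    def plan(self, missing: set[str]) -> list[str]:
        """Decide on corrective actions: restart each missing service, in a stable order."""
        return sorted(missing)

    def execute(self, to_restart: list[str]) -> None:
        """Carry out the plan and record what was done."""
        for name in to_restart:
            self.running.add(name)  # stand-in for actually restarting a process
            self.log.append(f"restart {name}")

    def loop_once(self) -> None:
        self.execute(self.plan(self.analyze(self.monitor())))

mgr = SelfHealingManager(desired={"api", "db", "cache"}, running={"api", "db", "cache"})
mgr.running.discard("db")          # simulate a crash
mgr.loop_once()
print(mgr.log)                     # ['restart db']
print(mgr.running == mgr.desired)  # True
```

Running the loop repeatedly is harmless: once observed state matches desired state, the plan is empty and nothing happens.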

Cloud platforms already use autonomous scaling to add or remove servers based on real-time demand. AIOps platforms monitor IT infrastructure and flag anomalies before they cause outages. Autonomous databases like Oracle’s Autonomous Database tune themselves, back themselves up, and patch their own security vulnerabilities without human direction. This frees engineers to focus on higher-level problems while the infrastructure handles its own upkeep.
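Autonomous scaling decisions often reduce to a simple proportional rule. The Kubernetes Horizontal Pod Autoscaler documentation, for example, describes computing desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue) and skipping changes when the ratio is within a tolerance (0.1 by default). The function below is a small sketch of that rule with hypothetical minimum and maximum bounds; it is not the actual controller code, which also handles stabilization windows, missing metrics, and pod readiness.

```python
import math

def desired_replicas(current: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Proportional scaling rule in the style of the Kubernetes HPA formula."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current                                     # close enough: avoid flapping
    proposed = math.ceil(current * ratio)
    return max(min_replicas, min(max_replicas, proposed))  # clamp to the allowed range

print(desired_replicas(4, current_metric=90, target_metric=60))  # 6: scale out under load
print(desired_replicas(4, current_metric=20, target_metric=60))  # 2: scale in when idle
print(desired_replicas(4, current_metric=63, target_metric=60))  # 4: within tolerance
```

The tolerance band is what keeps the system from adding and removing servers in response to every small fluctuation.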

Future Computing and Autonomous Computing Systems: Key Facts

| Aspect | Detail |
|---|---|
| Origin | IBM’s autonomic computing initiative in 2001 defined self-configuration, self-healing, self-optimization, and self-protection as core goals. |
| Cloud auto-scaling | Amazon EC2 Auto Scaling can add or terminate server instances within minutes based on traffic patterns. |
| Oracle Autonomous Database | Released in 2018, it applies machine learning to automate tuning, backups, and security patching. |
| AIOps | AIOps platforms use AI to correlate alerts and predict IT failures before they impact users. |
| Self-healing networks | Telecommunications companies use autonomous systems that reroute traffic around failures within seconds. |
| Kubernetes | Kubernetes, used by 84% of large enterprises according to CNCF surveys, automatically manages containerized workloads. |
| Security automation | Autonomous threat detection systems can identify and isolate cyberattacks in real time without human input. |
| Challenge | Autonomous systems can make unexpected decisions; ensuring explainability and oversight remains a key research concern. |

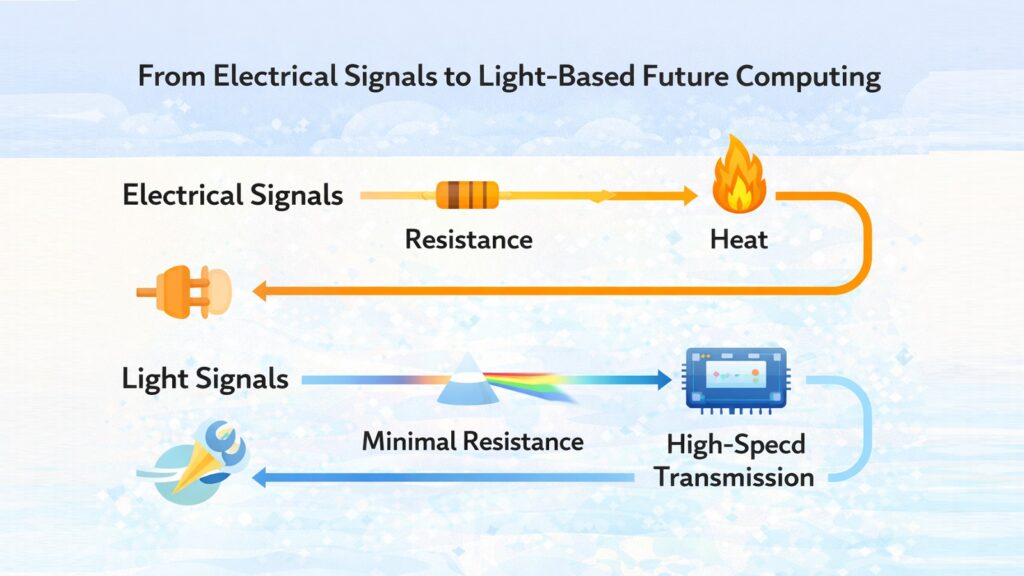

7. Future Computing and Photonic Computing: Processing with Light

Electrical signals are fast, but light offers something more valuable: enormous bandwidth with far less heat. Photonic computing is built on the idea that using photons instead of electrons to carry and process data could unlock a new level of performance. The concept has been researched for decades, but it is now approaching practical application in ways that were not possible before.

The bottleneck in modern processors is not just the speed of computation. It is also the heat generated and the energy consumed when electrons move through wires. Photons largely avoid this problem: they carry no electric charge, so they travel through optical fibers or waveguides without resistive losses and generate much less heat. Data centers, which already rely on fiber optics for communication between servers, are a natural target for photonic integration.

Companies such as Lightmatter and Luminous Computing are developing photonic chips for AI workloads. Intel has developed silicon photonics technology that integrates optical components directly onto a chip. In 2017, researchers at MIT demonstrated an optical neural network chip that performed computations using light instead of electricity. These are early steps, but the direction is clear: future computing may increasingly rely on light as a primary medium.
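Several photonic approaches to AI, including the 2017 MIT demonstration, perform matrix operations by routing light through meshes of Mach-Zehnder interferometers (MZIs): two beam splitters with a tunable phase shifter between them. The sketch below models one idealized, lossless MZI as a 2×2 matrix acting on complex light amplitudes, assuming 50:50 beam splitters and hypothetical helper names. It shows how a single phase setting steers optical power between two outputs, the basic building block that meshes combine into larger matrix multiplications.

```python
import cmath
import math

Matrix = list[list[complex]]

def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 2x2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def mzi(theta: float) -> Matrix:
    """Ideal MZI: beam splitter, phase shift theta on arm 0, beam splitter."""
    s = 1 / math.sqrt(2)
    splitter = [[s, 1j * s], [1j * s, s]]           # lossless 50:50 beam splitter
    phase = [[cmath.exp(1j * theta), 0], [0, 1]]     # tunable phase shifter
    return matmul(splitter, matmul(phase, splitter))  # light passes right to left

def apply(m: Matrix, amps: list[complex]) -> list[complex]:
    """Propagate two input amplitudes through the device."""
    return [m[0][0] * amps[0] + m[0][1] * amps[1],
            m[1][0] * amps[0] + m[1][1] * amps[1]]

for theta in (0.0, math.pi / 2, math.pi):
    out = apply(mzi(theta), [1, 0])                  # all light enters port 0
    powers = [round(abs(a) ** 2, 3) for a in out]    # optical power = |amplitude|**2
    print(f"theta={theta:.2f}: output powers {powers}")
```

As theta goes from 0 to pi, power shifts smoothly from output 1 to output 0, while the total stays at 1 because the device is lossless. The computation happens as the light propagates, rather than through charging and discharging transistors.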

Future Computing and Photonic Computing: Key Facts

| Aspect | Detail |
|---|---|
| Core idea | Uses photons instead of electrons to transmit and process data, enabling higher-bandwidth and more energy-efficient computing. |
| Bandwidth advantage | Signals in optical fiber and in copper both travel at a large fraction of the speed of light; optics wins on bandwidth per channel, lower loss over distance, and less heat. |
| Intel silicon photonics | Intel has shipped over 5 million silicon photonic transceivers, demonstrating commercial viability of optical interconnects. |
| MIT optical neural network | MIT researchers demonstrated an optical neural network chip in 2017 that performed neural network computations using light. |
| Lightmatter | Lightmatter’s photonic chip uses light-based matrix multiplication, critical for deep learning workloads. |
| Energy benefit | Photonic interconnects can reduce data center energy consumption for communication tasks by up to 50%. |
| Key challenge | Integrating photonic components with existing electronic systems at scale remains a manufacturing challenge. |
| Data center use | Major hyperscale data centers already use photonic interconnects between racks to manage bandwidth demands. |

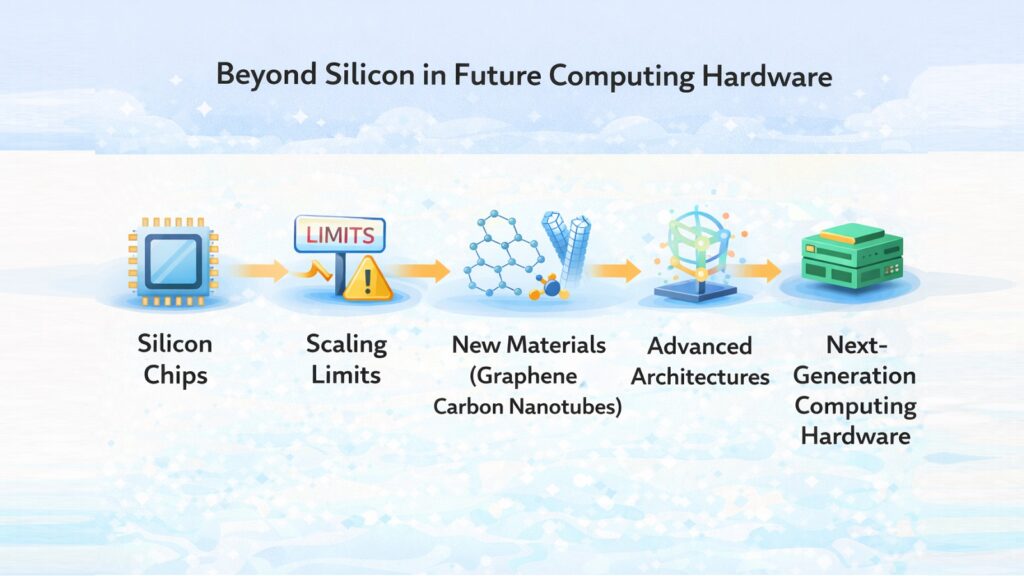

8. Future Computing and Post-Silicon Computing: Moving Beyond Traditional Chips

Silicon has powered computing for over half a century. It is cheap, abundant, and well understood. But it is also approaching its physical limits. The smallest features of today’s silicon transistors measure only a few nanometers, just tens of atoms across. Making them smaller without introducing errors is becoming exponentially harder, and the industry knows it.
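One reason shrinking gets exponentially harder is quantum tunneling. In the simple WKB approximation, the probability that an electron leaks through an insulating barrier of thickness d falls off as T ≈ exp(−2κd), with κ = √(2mΔE)/ħ, so thinning a barrier raises leakage exponentially. The sketch below assumes a rectangular 1 eV barrier and the free-electron mass; real devices involve effective masses, barrier shapes, and materials that change the numbers considerably, so only the trend is meaningful.

```python
import math

HBAR = 1.054571817e-34         # reduced Planck constant, J*s
ELECTRON_MASS = 9.1093837e-31  # free-electron mass, kg (real devices use an effective mass)
EV = 1.602176634e-19           # joules per electron-volt

def tunneling_probability(thickness_nm: float, barrier_ev: float = 1.0) -> float:
    """WKB estimate T ~ exp(-2 * kappa * d) for a rectangular barrier."""
    kappa = math.sqrt(2 * ELECTRON_MASS * barrier_ev * EV) / HBAR  # decay constant, 1/m
    return math.exp(-2 * kappa * thickness_nm * 1e-9)

for d in (3.0, 2.0, 1.0):
    print(f"{d:.1f} nm barrier: T ~ {tunneling_probability(d):.1e}")
```

Under these assumptions, each nanometer removed from the barrier multiplies the leakage probability by roughly four orders of magnitude, which is one reason the industry is exploring new materials and device structures.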

Post-silicon computing refers to the search for materials and architectures that can carry computing forward when silicon runs out of room. Graphene, a single layer of carbon atoms arranged in a hexagonal lattice, lets electrons move far more freely than silicon does, although its lack of a natural bandgap makes it hard to use as a switch. Carbon nanotubes have shown promise as transistor materials. Gallium nitride and gallium arsenide are already used in power electronics and high-frequency applications.

Beyond materials, entirely new computing architectures are being explored. Three-dimensional chip stacking places layers of processors and memory directly on top of each other, shortening the distances signals must travel. Chiplet designs break monolithic processors into smaller modular pieces that can be mixed and matched. The transition away from silicon will not happen overnight, but the groundwork is being laid. Future computing hardware will look quite different from the chips inside today’s devices.

Future Computing and Post-Silicon Computing: Key Facts

| Aspect | Detail |
|---|---|
| Silicon limit | Leading-edge process nodes are now marketed as 3 nanometers (node names no longer match a physical transistor dimension); further miniaturization faces quantum tunneling and heat dissipation issues. |
| Graphene properties | Graphene’s electron mobility can be on the order of 100 times that of silicon, but it lacks a natural bandgap, which makes graphene transistors hard to switch fully off. |
| Carbon nanotubes | IBM demonstrated functional carbon nanotube transistors as small as 1.4 nanometers, smaller than silicon equivalents. |
| Gallium nitride (GaN) | GaN chips handle higher voltages and temperatures than silicon, already used in fast-charging adapters and RF systems. |
| 3D chip stacking | AMD’s 3D V-Cache technology stacks additional cache memory directly on the processor die, improving bandwidth and performance. |
| Chiplet design | AMD, Intel, and Apple all use chiplet-based designs to combine multiple smaller chips into one package. |
| TSMC roadmap | TSMC has outlined a path toward 2-nanometer silicon chips while also investing in beyond-silicon research. |
| Post-silicon timeline | Most researchers expect practical post-silicon transistors to enter commercial production between 2030 and 2040. |

Conclusion: Future Computing as the Driving Force of Next-Gen Innovation

None of the eight trends discussed here will change the world on its own. Quantum processors are likely to work alongside classical and neuromorphic hardware, each handling the tasks it suits best. Edge computing depends on distributed frameworks to coordinate data across many nodes. Human-computer integration becomes more powerful when autonomous systems manage the complexity behind the interface. These threads are woven together, not separate.

The industries most likely to feel the shift first are healthcare, energy, manufacturing, and logistics. Drug discovery timelines could shrink dramatically with quantum simulation. Power grids could become self-managing through autonomous computing. Factories could run continuous predictive maintenance through edge-connected sensors. Patients could receive real-time health monitoring through wearables and neural interfaces. The list goes on.

What is easy to miss in all this is the human dimension. Computing has always been about extending what people can do. Future computing does not change that. It just extends it further, into territory that still feels unfamiliar. The pace of change can feel unsettling. But underneath all the technical language and the research papers, this is still just people trying to build tools that work better than the ones they have now. That impulse is old and steady, even when the technology is not.

Future Computing: Eight Trends and Their Nearest-Term Impact

| Trend | Nearest-Term Real-World Impact |
|---|---|
| Quantum Computing | Accelerating drug discovery and optimizing complex logistics networks beyond classical capabilities. |
| Neuromorphic Computing | Enabling ultra-low-power AI in hearing aids, prosthetics, and industrial sensor networks. |
| Edge Computing Evolution | Supporting autonomous vehicles and real-time industrial monitoring without cloud dependency. |
| Distributed Computing Systems | Powering global AI model training and decentralized financial infrastructure at scale. |
| Human-Computer Integration | Restoring motor function and communication for paralyzed individuals through brain-computer interfaces. |
| Autonomous Computing Systems | Reducing IT operational costs through self-healing infrastructure and AI-driven resource management. |
| Photonic Computing | Reducing data center energy consumption for high-bandwidth AI workloads through optical interconnects. |
| Post-Silicon Computing | Extending transistor density and performance for another generation through 3D stacking and chiplet designs. |